CDS-Courant Undergraduate Research Program (CURP)

PROGRAM DATES: JANUARY 24 – MAY 18, 2022

LOCATION: RESEARCH PROJECTS WILL TAKE PLACE REMOTELY

FELLOWSHIP AWARD: $3,500

APPLICATION DEADLINE: OCTOBER 31, 2021

The NYU Center for Data Science

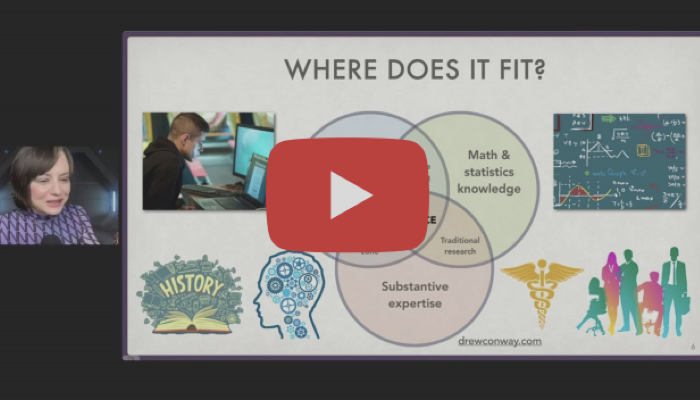

The Center for Data Science (CDS) is the focal point for New York University’s university-wide efforts in Data Science. The Center was established in 2013 to advance NYU’s goal of creating a world-leading Data Science training and research facility, and arming researchers and professionals with the tools to harness the power of Big Data. Today, CDS counts 20 jointly appointed interdisciplinary faculty housed on three floors of our magnificent 60 5th Avenue building, one of New York City’s historic properties. It is home to a top-ranked MS in Data Science program, one of the first PhD programs in Data Science, and a new undergraduate program in Data Science, as well as a lively Fellow and Postdoctoral program. It has over 70 associate and affiliate faculty from 25 departments in 9 schools and units. With cross-disciplinary research and innovative educational programs, CDS is shaping the new field of Data Science.

Upcoming Events

- Math and Data (MaD) Seminar: Tristan Buckmaster (NYU)

  April 18, 2024, 2:00 pm

- Data Science Lunch Seminar Series: Umang Bhatt (NYU)

  April 24, 2024, 12:30 pm

- Math and Data (MaD) Seminar: Matus Telgarsky

  April 25, 2024, 2:00 pm

- Math and Data (MaD) Seminar: Zhuoran Yang

  May 2, 2024, 2:00 pm

- Math and Data (MaD) Seminar: Guy Bresler (MIT)

  May 9, 2024, 11:00 am

Events in April–July 2024

- April 3, 2024, 12:30 pm: Data Science Lunch Seminar: Ekin Akyürek (MIT)

- April 10, 2024, 12:30 pm: Data Science Lunch Seminar Series: Julian Michael (NYU)

- April 11, 2024, 2:00 pm: MaD Seminar: Gabriel Peyré

- April 17, 2024, 9:00 am: AI, Misinformation, and Policy Seminar Series: Lynnette Hui Xian Ng

- April 17, 2024, 12:30 pm: Data Science Lunch Seminar: Jungwoo Lee (Seoul National University)

- April 18, 2024, 2:00 pm: Math and Data (MaD) Seminar: Tristan Buckmaster (NYU)

- April 24, 2024, 12:30 pm: Data Science Lunch Seminar Series: Umang Bhatt (NYU)

- April 25, 2024, 2:00 pm: Math and Data (MaD) Seminar: Matus Telgarsky

- May 2, 2024, 2:00 pm: Math and Data (MaD) Seminar: Zhuoran Yang

- May 9, 2024, 11:00 am: Math and Data (MaD) Seminar: Guy Bresler (MIT)

No events are listed for June or July 2024.